Snap! Websites

An Open Source CMS System in C++

I do load testing with various tools, but most of them just send many requests all at once, with little control over the rate at which the hits are sent.

Today I was reading a web post about nginx mirroring and the author mentioned a tool named "hey". It is actually written in Go and can be found here:

This tool has options such as -q to limit the number of requests per second. The -c option is also important: it defines the number of concurrent requests. You can also use the -t option to set a timeout other than the default of 20 seconds ...
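To illustrate what rate limiting means here, this is a minimal sketch of a pacing loop in Python. It is not how hey is implemented; the function name `paced_calls` and its parameters are hypothetical, and it only models a single worker (no concurrency). Each call is scheduled on a fixed time slot so the loop never runs faster than the requested rate.

```python
import time


def paced_calls(action, count, per_second, sleep=time.sleep, clock=time.monotonic):
    """Call action() `count` times, no faster than `per_second` calls per second.

    `sleep` and `clock` are injectable so the pacing can be checked
    without actually waiting (hypothetical helper, for illustration only).
    """
    interval = 1.0 / per_second
    next_slot = clock()          # time at which the next call is allowed
    results = []
    for _ in range(count):
        delay = next_slot - clock()
        if delay > 0:
            sleep(delay)         # wait until our slot comes up
        results.append(action())
        next_slot += interval    # fixed slots, so a slow call does not shift later ones
    return results


if __name__ == "__main__":
    # Stand-in for "send one request": just record when we were called.
    start = time.monotonic()
    stamps = paced_calls(lambda: time.monotonic() - start, count=5, per_second=10)
    print([round(s, 2) for s in stamps])
```

In a real load test the `action` would send one HTTP request, and you would run several such loops side by side to get concurrency.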

Lately, I have been reading about port knocking and saw many posts saying that it is not that safe and therefore rather useless since it adds the burden of knocking before you can connect with a tool such as ssh (for which port knocking is most often implemented).

The fact is, that isn't true. It is perfectly safe if you use the proper tools to set up the port knocking and execute it. In fact, Wikipedia talks about it and clearly tells you that port knocking with just 3 ports generates some 240+ trillion possibilities, which pretty much no hacker can hope to ever break.
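For an idea of where a number of that size comes from, here is a quick back-of-the-envelope calculation. It assumes each knock can be any port from 1 to 65535, that the order matters, and that the same port may be used more than once; the function name is just for illustration.

```python
PORTS = 65535  # assumption: every port from 1 to 65535 is a candidate


def knock_sequences(knocks, ports=PORTS):
    """Number of ordered knock sequences of the given length."""
    return ports ** knocks


if __name__ == "__main__":
    total = knock_sequences(3)
    print(f"{total:,} possible 3-port sequences")
    # prints: 281,462,092,005,375 possible 3-port sequences
```

Under those assumptions, the count is a bit over 281 trillion, which is comfortably in the "240+ trillion" range.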

The snapwebsites ...

As I've been working on an MP3 decoder/encoder system capable of decoding and encoding MP3 data in parallel, I've had the chance to test that system with a plethora of worker threads (my main server has 64 CPUs).

Along the way, I've had many surprises.

First of all, some jobs, even though I have 64 CPUs, do not make use of much more than 8 CPUs. That is, whether I run with 64 or 8, the result is that I get the task done in about the same amount of time. However, when using just 8 CPUs, I can run the command 8 times in parallel and therefore process 8 files ...

In the last few days, I worked with, let's call him John, who read my post of Aug 15, 2020 about my DNS issues (I was hit pretty badly by a DNS amplification attack). John wanted to run iplock on his system because it's much more flexible than directly using iptables with fail2ban. So I made sure that it would compile on Ubuntu 20.04, and he made it work on his system by also copying the dependencies (i.e. the shared libraries).

Here we present John's log on how to install iplock on a computer from a compiled version, as opposed to directly from a package. The problem is that I ...

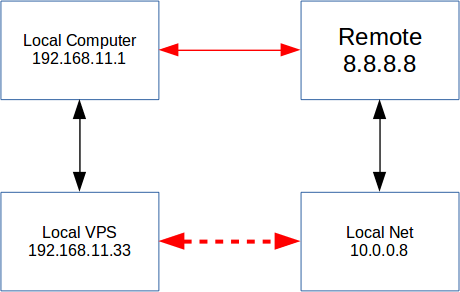

Today I needed to connect my local VPS at my office to a VPS at DigitalOcean. Not only that, the DigitalOcean VPS runs the service I wanted to connect to on a separate network. Here is an example of the network (the IPs are fake, of course):

Today I created a new release, version 1.8. You can find it on GitHub.

This includes fixes for a ton of bugs that plagued 1.7. It also includes new code (thread pool/thread worker) and, especially, the breakup of several objects out of libsnapwebsites into their own contrib projects.

This includes:

In the last two days, I worked on a Jira task assigned to me about shredding files. Whenever you purge our project, it deletes a lot of files, including all the logs, configuration files, etc. By default, though, the purge command will just do:

rm -rf <path> ...

This generally works, only it is much better if you can also make sure that the content of the file is not recoverable. Under Unix systems, it has often been close to impossible to recover files because the OS was very quick at reusing the just released blocks for new data. Not ...
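As a rough illustration of the idea (overwrite the content first, then delete), here is a minimal Python sketch. It is not the code used by our purge command; the names `overwrite_file` and `shred_file` are hypothetical. It also assumes a filesystem that rewrites blocks in place: on journaling or copy-on-write filesystems and on SSDs, overwriting a file does not guarantee that the old data is gone.

```python
import os


def overwrite_file(path, passes=1, chunk_size=64 * 1024):
    """Overwrite a regular file in place with random bytes (size unchanged)."""
    size = os.path.getsize(path)
    with open(path, "r+b") as f:
        for _ in range(passes):
            f.seek(0)
            remaining = size
            while remaining > 0:
                n = min(chunk_size, remaining)
                f.write(os.urandom(n))
                remaining -= n
            f.flush()
            os.fsync(f.fileno())  # push the new bytes to the disk before the next pass


def shred_file(path, passes=1):
    """Overwrite the file's content, then remove it."""
    overwrite_file(path, passes=passes)
    os.remove(path)
```

For a whole directory tree, you would walk it and call `shred_file()` on each regular file before removing the directories. The `shred` command from coreutils does the overwrite step for you on the command line.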

When I first started Snap!, I had one project, the snapwebsites folder.

As I started growing the project, I wanted to have some features that required other modules such as the log4cplus library to handle logging.

The fact is that, as a result, the library (read: the code common to all the Snap! Websites services) has grown to be a really large one! It is difficult to manage, and each time a small change is made, we incur a long wait for everything to rebuild.

So we started working on breaking up the library into smaller parts. We already have some functional projects which now encompass some of the low ...

I updated the old version of zipios to version 0.1.7 as a DoS bug was found in the older version (0.1.5).

The bug was found and fixed by Mike Salvatore of Salvatore Security.

I noticed another potential problem with a second loop, so I enhanced the patch a bit.

Mike got CVE-2019-13453 registered. He also made a post about how the bug was discovered and fixed.

If you are using Zipios++ version 0.1.5 (or the CVS source code) or any older version, you should upgrade to version 0.1.7 as soon as possible. The interface is exactly the same so the upgrade should be ...

Today I noticed hundreds of log entries from the snapwatchdog service. These appear because the daemon checks whether clamav-freshclam is enabled. This is a daemon used to make sure fresh virus signatures are downloaded at least once a day.

Aug 23 18:14:42 hostname snapwatchdogserver[10305]: Failed to get unit file state for clamav-freshclam.service: No such file or directory

The snapwatchdog service runs its tests about once a minute. This means we check whether the clamav-freshclam service is enabled once a minute. That's 1,440 times a day, assuming we don't lose even one minute. ...
